Face Tracking

Face tracking using a webcam or iPhone

Motion Capture for VRC Avatar Viewer (hereafter "the capture app") can also apply facial expressions, such as blinking and mouth movements, to the avatar. You choose two things: how facial expression data is captured, and the format used to send it to the avatar.

Two Data Sources (Capture Methods)

- Webcam (default): Estimates facial expressions from the video of a webcam connected to the PC. No additional equipment is needed, so anyone can use it, but it has limitations such as being unable to capture cheek or tongue movements.

- iPhone (iFacialMocap): Using a Face ID-compatible iPhone with iFacialMocap (a paid iOS app), you can capture 52 ARKit-based blendshapes with high precision. The expressiveness greatly exceeds that of a webcam.

The format used to send the captured facial expression data to the avatar is selected via "Facial Expression Send Format" (see Step 3 for details).

- When iFacialMocap is connected, its facial expression data takes priority over the webcam's (body movements are still captured from the webcam)

- Regardless of the send format, if iFacialMocap is enabled, its facial expression data will be used

- For details on VRChat integration, see the Face Tracking in VRChat guide
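
The priority rule above can be sketched as a small selection function. This is only an illustration, not the capture app's actual code: `CaptureState` and `select_sources` are hypothetical names, and falling back to the webcam while iFacialMocap is enabled but not yet receiving is an assumption.

```python
from dataclasses import dataclass


@dataclass
class CaptureState:
    ifacialmocap_enabled: bool    # the "Use iFacialMocap" toggle
    ifacialmocap_receiving: bool  # True while data is arriving from the iPhone


def select_sources(state: CaptureState) -> dict:
    """Return which device drives the face and which drives the body."""
    # Assumption: fall back to the webcam until iFacialMocap data arrives.
    use_iphone = state.ifacialmocap_enabled and state.ifacialmocap_receiving
    return {
        "face": "ifacialmocap" if use_iphone else "webcam",
        "body": "webcam",  # body movements always come from the webcam
    }
```

Note that the send format does not appear in this function: the choice of data source and the choice of send format are independent settings.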

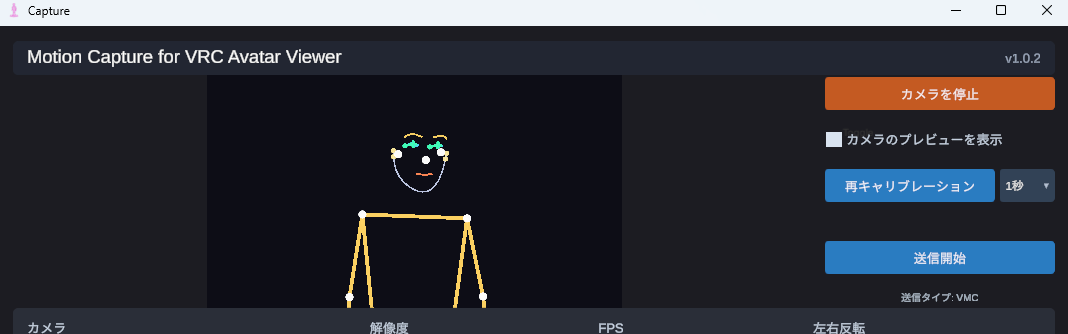

Step 1: Capture Facial Expressions with a Webcam

This is the default method. If you start the camera following the steps in the Streaming Mode Guide, facial expressions will automatically be estimated from the webcam. No additional configuration is required.

1-1. Expressions That Can Be Captured

- Blinking (each eye individually)

- Vowels (A, I, U, E, O)

- Gaze direction (up, down, left, right)

- Eyebrow raise/lower, mouth open/close, mouth corner movement, etc.

1-2. Expressions That Cannot Be Captured or Are Difficult to Capture

- Cheek puffing, tongue movement

- Faces in dark environments: accuracy drops significantly, so the face must be lit well enough to be clearly visible

- Angles where the front of the face is not visible, such as profile or looking down

When using a webcam alone, VRM Standard is recommended because it works naturally with most avatars. Perfect Sync and VRCFT also work, but the movements listed above still cannot be captured.

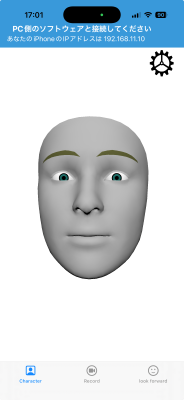

Step 2: Capture High-Precision Expressions with iPhone (iFacialMocap)

Using a Face ID-compatible iPhone (iPhone X or later) significantly improves the precision of facial expressions compared to a webcam. The iOS app iFacialMocap is required.

2-1. Requirements

- A Face ID-compatible iPhone or iPad Pro

- iFacialMocap purchased from the App Store (paid iOS app)

- The iPhone and PC must be connected to the same Wi-Fi (LAN)
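
If the connection fails, a quick way to rule out the "different network" case is to compare the two addresses. The sketch below uses Python's standard `ipaddress` module; `on_same_network` is a hypothetical helper, and the `/24` prefix length is an assumption that matches typical home routers but may not match your network.

```python
import ipaddress


def on_same_network(pc_ip: str, iphone_ip: str, prefix_len: int = 24) -> bool:
    """Return True if both IPv4 addresses fall in the same subnet."""
    # strict=False lets us build the network from a host address.
    network = ipaddress.ip_network(f"{pc_ip}/{prefix_len}", strict=False)
    return ipaddress.ip_address(iphone_ip) in network


# Example: PC at 192.168.1.25, iPhone showing 192.168.1.10
print(on_same_network("192.168.1.25", "192.168.1.10"))
```

A mistyped address raises `ValueError`, which also helps catch the "wrong IP" case before it is entered into the capture app.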

2-2. Setup on the iPhone Side

- Launch iFacialMocap on your iPhone

- The screen will display "Please connect with the PC software", so make a note of the IP address (e.g., 192.168.1.10)

- If the IP address is not shown, open iFacialMocap's settings and check "Destination Settings". The IP address is hidden when a value is entered in "Destination IP Address". Press "Reset Destination Settings" below it and restart the app to display the address again.

2-3. Setup on the Capture App Side

- Enter the iPhone's IP address you noted into the "iFacialMocap IP Address" field in the capture app

- Turn ON "Use iFacialMocap"

- If the status next to it changes to "Receiving", the connection is successful

2-4. Meaning of Status Display

| Status | Meaning |
|---|---|
| Stopped | The "Use iFacialMocap" toggle is OFF |
| IP Not Entered | The iPhone's IP is blank. Once entered, it will automatically attempt to connect |
| Waiting for Connection | Handshake was sent but there's no response from the iPhone (iFacialMocap not running / wrong IP / different network, etc.) |
| Receiving | Facial expression data is being received normally |
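
One way to read the table is as a series of checks evaluated from top to bottom. The following is a minimal sketch of that reading, not the capture app's implementation: `connection_status` is a hypothetical function, and the 2-second timeout for deciding that data has stopped arriving is an assumed value.

```python
from typing import Optional


def connection_status(
    enabled: bool,
    ip_text: str,
    last_packet_at: Optional[float],
    now: float,
    timeout_s: float = 2.0,  # assumption: not taken from the capture app
) -> str:
    """Map the toggle, IP field, and packet timing to a status label."""
    if not enabled:
        return "Stopped"
    if not ip_text.strip():
        return "IP Not Entered"
    # No packet yet, or the most recent packet is too old.
    if last_packet_at is None or now - last_packet_at > timeout_s:
        return "Waiting for Connection"
    return "Receiving"
```

When the status stays at "Waiting for Connection", the checks that already passed tell you where to look: the toggle is ON and an IP is entered, so the remaining causes are on the iPhone or network side.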

2-5. Expressions That Can Be Captured

iFacialMocap captures 52 ARKit-based blendshapes, head rotation, and left/right gaze. This includes cheek puffing and tongue movements, which a webcam cannot capture (the avatar must have the corresponding blendshapes).

- Even while iFacialMocap is enabled, body, arm, and finger movements continue to be captured from the webcam

- This is a hybrid configuration: "Facial expressions from iFacialMocap, body from webcam"

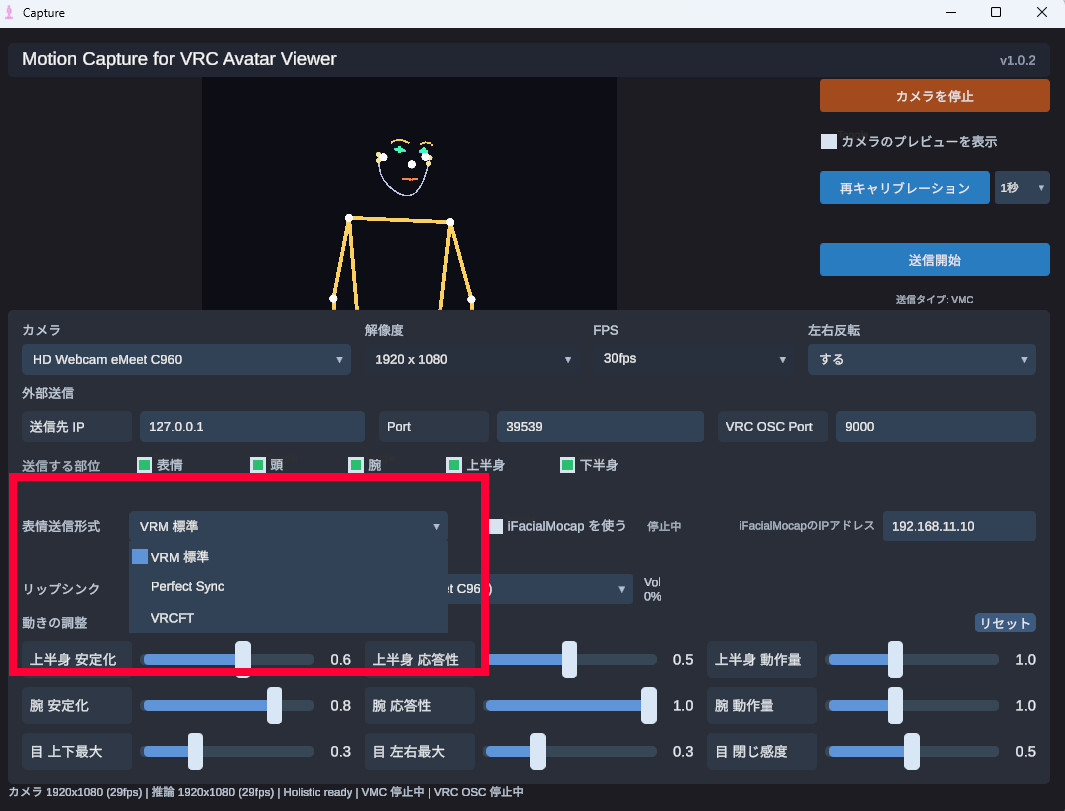

Step 3: Choose a Facial Expression Send Format (Blendshape Standard)

Select the format from the "Facial Expression Send Format" dropdown in the capture app, according to what your avatar supports.

VRM Standard

Sends the basic facial expressions built into VRM, such as blinking, vowels, and gaze, via VMC. Works with any avatar, but expressiveness is limited. This is the recommended default when using a webcam alone.

Perfect Sync

Sends 52 ARKit blendshapes via VMC. On Perfect Sync-compatible avatars, even subtle movements of mouth corners, eyebrows, and eyelids are reflected. It is effective when combined with iFacialMocap.

VRCFT

Uses the Unified Expressions standard of VRCFaceTracking 5.x and sends data simultaneously to both VMC and VRChat OSC. Its key feature is that the same facial expression can be reproduced on both your VRChat avatar and the avatar in the Viewer (e.g., for OBS streaming).

- When VRCFT is selected, the caption below the "Start Sending" button changes to "Send Type: VMC + VRChat OSC"

- For detailed VRChat integration steps, see the Face Tracking in VRChat guide

- The "Facial Expression Send Format" on the capture app side and the "Type" on the Viewer side must be set to the same value (see Step 4 for details)
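
The relationship between the send format and where the data goes can be summarized in a few lines. This is a hedged sketch with hypothetical function names; the VRCFT caption matches the text above, while the caption produced for the other two formats is an assumption.

```python
def transports_for(send_format: str) -> tuple:
    """Return the destinations used by a given send format."""
    if send_format == "VRCFT":
        return ("VMC", "VRChat OSC")  # sent to both at the same time
    if send_format in ("VRM Standard", "Perfect Sync"):
        return ("VMC",)
    raise ValueError(f"unknown send format: {send_format}")


def send_type_caption(send_format: str) -> str:
    """Build a caption in the style shown below the "Start Sending" button."""
    return "Send Type: " + " + ".join(transports_for(send_format))


print(send_type_caption("VRCFT"))
```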

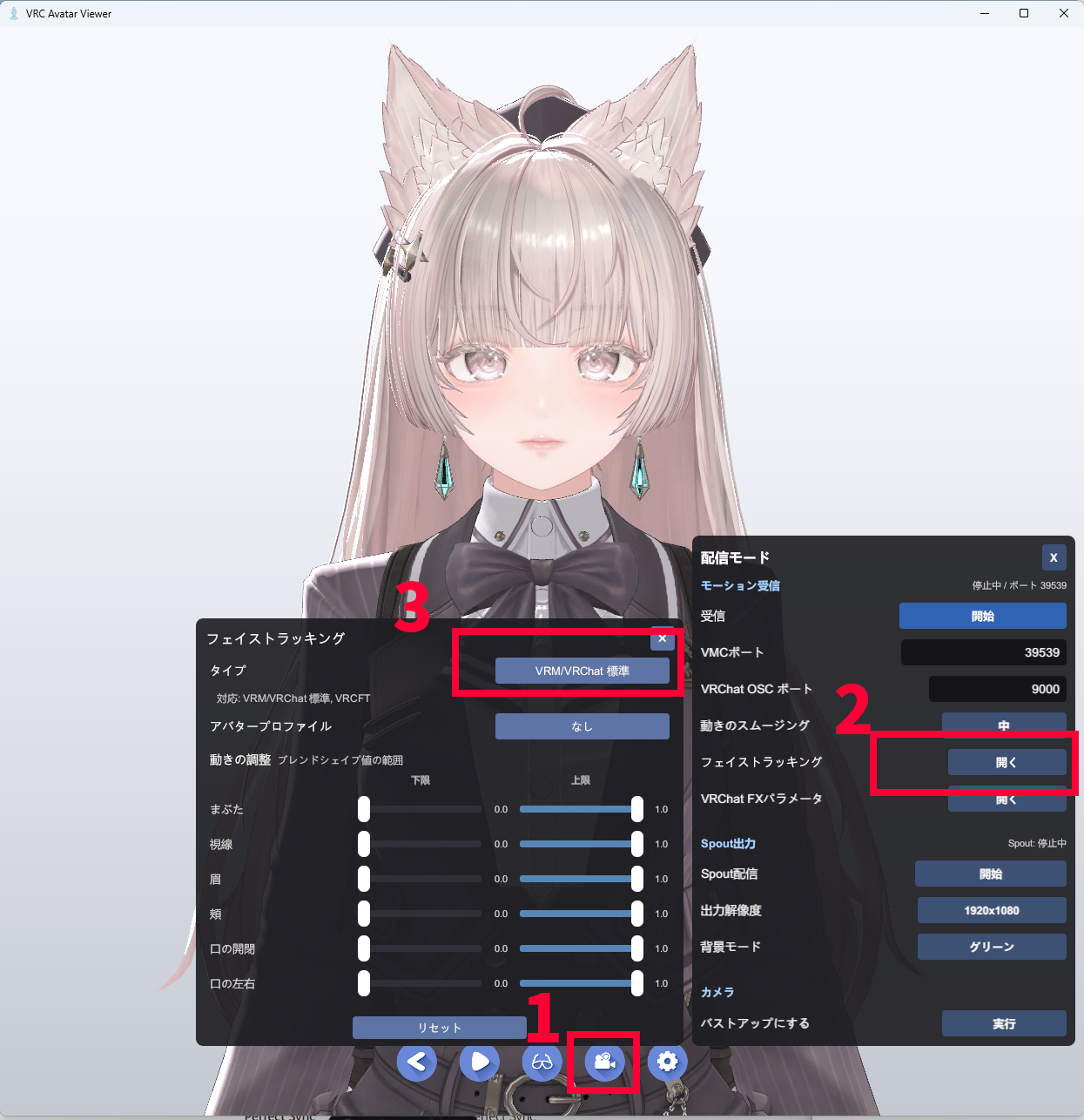

Step 4: Choose the Face Tracking Type on the Viewer App Side

The "Facial Expression Send Format" selected on the capture app side and the "Type" selected on the Viewer app side must be set to the same value. To switch the type on the Viewer side, open the window via the "Face Tracking" button on the streaming mode toolbar.

4-1. Open the Window

- Display the avatar in the Viewer app

- Turn on Streaming Mode

- Click the "Face Tracking" button in the streaming panel

4-2. Switch the Type

Each click of the "Type" button at the top of the window cycles through the options in the order VRM/VRChat Standard → Perfect Sync → VRCFT. Make sure it matches the selection on the capture app side.

| Viewer "Type" | Capture App "Facial Expression Send Format" |
|---|---|
| VRM/VRChat Standard | VRM Standard |
| Perfect Sync | Perfect Sync |
| VRCFT | VRCFT |
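
The cycling order and the matching rule can be expressed as a lookup table plus two small functions. This sketch is illustrative only: the names are hypothetical, and wrapping from VRCFT back to VRM/VRChat Standard on the next click is an assumption based on the word "cycles".

```python
VIEWER_TYPES = ["VRM/VRChat Standard", "Perfect Sync", "VRCFT"]

# Viewer "Type" -> capture app "Facial Expression Send Format"
VIEWER_TO_CAPTURE = {
    "VRM/VRChat Standard": "VRM Standard",
    "Perfect Sync": "Perfect Sync",
    "VRCFT": "VRCFT",
}


def next_type(current: str) -> str:
    """Return the type selected after one more click of the "Type" button."""
    index = VIEWER_TYPES.index(current)
    return VIEWER_TYPES[(index + 1) % len(VIEWER_TYPES)]  # assumed wrap-around


def settings_match(viewer_type: str, capture_format: str) -> bool:
    """True if the Viewer type corresponds to the capture app's send format."""
    return VIEWER_TO_CAPTURE.get(viewer_type) == capture_format
```

The only pair whose labels differ is VRM/VRChat Standard on the Viewer side and VRM Standard on the capture app side, which is why a plain string comparison of the two labels would not be enough.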

4-3. How to Read the "Supported:" Display

Directly below the "Type" button, a list of the types supported by the currently loaded avatar is displayed, like "Supported: VRM/VRChat Standard, Perfect Sync".
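
Reading the display amounts to splitting the text after "Supported:" on commas and checking whether the type you want is in the list. A minimal sketch, assuming the display always follows the format of the example above (`parse_supported` is a hypothetical helper):

```python
from typing import List


def parse_supported(line: str) -> List[str]:
    """Split a "Supported: A, B" display line into a list of type names."""
    prefix = "Supported:"
    if not line.startswith(prefix):
        raise ValueError("line does not start with 'Supported:'")
    items = line[len(prefix):].split(",")
    return [item.strip() for item in items if item.strip()]


supported = parse_supported("Supported: VRM/VRChat Standard, Perfect Sync")
print("VRCFT" in supported)
```

In this example the avatar does not list VRCFT, so choosing VRCFT as the type would not be a good fit for it.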

4-4. Notes

- The category-based facial expression intensity sliders are disabled while VRCFT is selected. Because VRCFT drives expression parameters directly via VRChat OSC, the Viewer's blendshape intensity adjustments are not applied