Streaming Mode (VMC connection, OBS integration)

Animate your avatar with a webcam and motion capture

By combining VRC Avatar Viewer with Motion Capture for VRC Avatar Viewer (hereafter "capture app"), you can animate your avatar with just one webcam. You can also use the result for streaming with OBS Studio or similar software.

The capture app processes all camera footage entirely locally. Nothing is sent externally. The only network communication is the VMC or VRChat OSC traffic that you explicitly start.

This guide walks you through the setup in the following steps.

- Track your movements with the capture app

- Send the data to VRC Avatar Viewer via the VMC protocol to animate your avatar

- Capture VRC Avatar Viewer in OBS and use it as a streaming source (optional)

Requirements

- VRC Avatar Viewer (main application)

- Motion Capture for VRC Avatar Viewer (capture app / available on BOOTH)

- Webcam (720p or higher recommended)

- Streaming software such as OBS Studio (optional)

[Capture app] → VMC / UDP → [VRC Avatar Viewer] → Spout / Transparent Window → [OBS]

- This guide first explains a setup where everything runs on a single PC. If you want to use separate PCs for streaming and capture, there is a note at the end of Step 2

- For instructions on installing VRC Avatar Viewer itself and loading avatars, please read From Avatar Export to Display first

Set Up the Capture App

The capture app is a Windows application that estimates full-body movement (body, arms, fingers, and facial expressions) from webcam footage and transmits it externally via the VMC protocol.

1-1. Download and Launch

- Download the capture app ZIP from the BOOTH distribution page

- Extract the ZIP and double-click Capture.exe to launch it

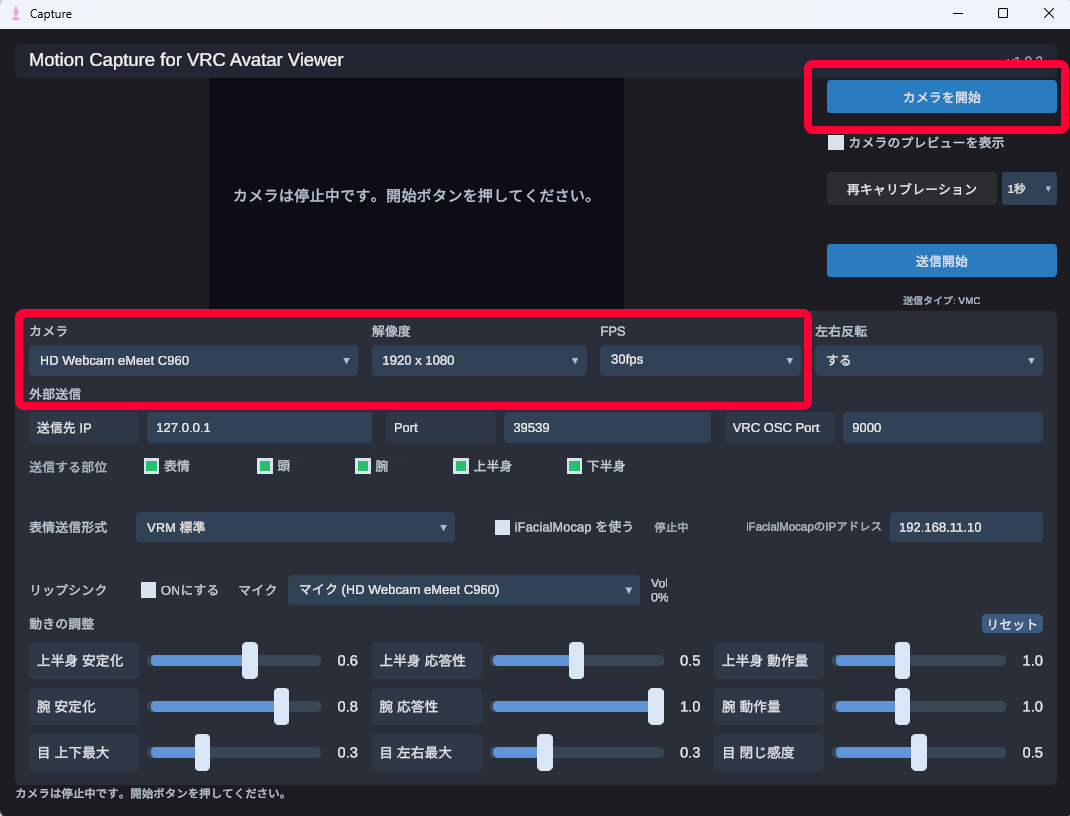

1-2. Start the Camera

- Select your "Camera", "Resolution", and "FPS"

- Click the "Start Camera" button

- Once you appear in the preview, stand in front of the camera and hold still for 1–2 seconds (initial calibration)

- Position yourself so your entire body fits within the frame. Upper body only will still work, but full-body tracking requires your full body to be visible

- Keep the background as simple as possible and ensure the lighting is bright enough for your face to be clearly visible

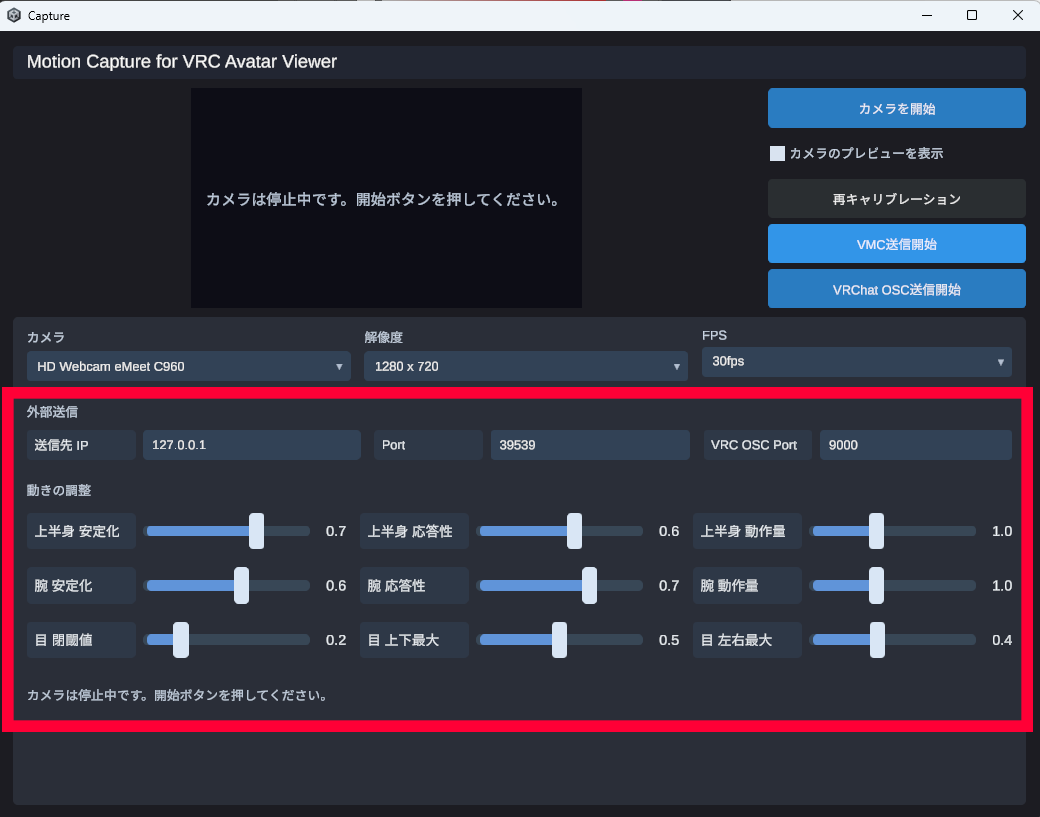

1-3. Adjust Movements

If the preset doesn't work well, adjust the sliders at the bottom of the screen. The following three parameters can be set separately for two sections, Upper Body and Arms.

| Parameter | Effect |
|---|---|
| Stabilization | Reduces jitter (higher values increase tracking lag) |
| Responsiveness | How closely sudden movements are followed (higher values make movement more agile) |
| Motion Amount | Amount of output movement (lower values make movements more subtle) |

Settings are automatically saved when the app is closed.
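
To build intuition for how the three sliders trade off against each other, here is a minimal sketch based on simple exponential smoothing. It is not the capture app's actual algorithm, which this guide does not describe. The function name `smooth_step`, the 0.0 to 1.0 parameter ranges, and the constants are all assumptions chosen for illustration.

```python
def smooth_step(previous, target, stabilization=0.5, responsiveness=0.5, motion_amount=1.0):
    """Return the next output value for one tracked coordinate.

    All parameters are hypothetical 0.0-1.0 values, not the app's real units.
    """
    # Motion Amount: scale the tracked value toward the rest pose (0.0).
    scaled_target = target * motion_amount
    # Stabilization: a higher value gives a smaller blend factor (less jitter, more lag).
    alpha = 1.0 - 0.9 * stabilization
    # Responsiveness: a higher value raises the blend factor when the jump is large.
    alpha = min(1.0, alpha + responsiveness * abs(scaled_target - previous))
    return previous + alpha * (scaled_target - previous)


if __name__ == "__main__":
    value = 0.0
    # Feed a sudden jump to 1.0 and watch the output catch up frame by frame.
    for frame in range(5):
        value = smooth_step(value, 1.0, stabilization=0.8, responsiveness=0.2)
        print(frame, round(value, 3))
```

In this sketch, raising the stabilization argument makes the output approach the target more slowly, while raising responsiveness lets large jumps through faster, which mirrors the trade-off described in the table.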

1-4. When There Is a Mismatch Between Your Pose and the Avatar's Pose

Press the "Recalibrate" button to reset the reference pose after a 3-second countdown.

1-5. Choose Which Body Parts to Send

Using the "Body Parts to Send" checkboxes on the right side of the screen, you can individually toggle ON/OFF which body parts are reflected on the avatar. Parts that are turned OFF will remain stationary (in their default pose).

| Body Part | Description |
|---|---|
| Facial Expressions | Face tracking results including blinking, mouth, and gaze |
| Head | Head orientation (neck rotation) |
| Arms | Movement of both arms and fingers |
| Upper Body | Torso up/down and forward/back tilt, shoulder movement |
| Lower Body | Hip and leg movement |

- When streaming while seated in a chair, turning Lower Body OFF helps prevent false detections of standing or sitting

- If you are using a separate tool for facial expression tracking (such as VRCFaceTracking), turning Facial Expressions OFF avoids conflicts

1-6. Lip Sync

This feature generates mouth movement (lip sync) from microphone audio. It responds faster than webcam face tracking and produces more natural mouth movement. It is useful for singing streams or when you want to stream while wearing a mask.

How to Use

- Turn ON the "Enable" toggle in the "Lip Sync" section

- Select the input device you want to use from the "Microphone" dropdown

- Speak and confirm that the volume meter next to it responds

- While lip sync is ON, the microphone volume and vowel analysis results take priority over webcam mouth movement

- If the microphone is not responding, check in Windows Sound settings that the input device is enabled

- The selected microphone is saved in PlayerPrefs and will be restored on the next launch
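
As a rough illustration of how microphone volume can drive mouth movement, the sketch below maps the loudness (RMS) of a block of audio samples to a mouth-open value between 0.0 and 1.0. This is an assumption-laden simplification: the capture app also uses vowel analysis, which is not reproduced here, and the function name and the `noise_floor` / `full_open` thresholds are hypothetical.

```python
import math


def mouth_open_from_samples(samples, noise_floor=0.02, full_open=0.3):
    """Map audio samples (floats in -1.0..1.0) to a 0.0-1.0 mouth-open value.

    noise_floor and full_open are hypothetical RMS thresholds, not app settings.
    """
    if not samples:
        return 0.0
    # Root-mean-square level of the block, a common loudness estimate.
    rms = math.sqrt(sum(s * s for s in samples) / len(samples))
    # Below the noise floor the mouth stays closed; at full_open it is fully open.
    openness = (rms - noise_floor) / (full_open - noise_floor)
    return max(0.0, min(1.0, openness))
```

Because a volume estimate like this needs only a few milliseconds of audio, it is plausible that it reacts faster than per-frame webcam analysis, which is consistent with the behavior described above.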

Connect to VRC Avatar Viewer and Animate Your Avatar

Motion data is sent from the capture app to VRC Avatar Viewer using the VMC protocol (OSC over UDP). Simply enable reception on the VRC Avatar Viewer side and specify the destination on the capture app side to establish the connection.

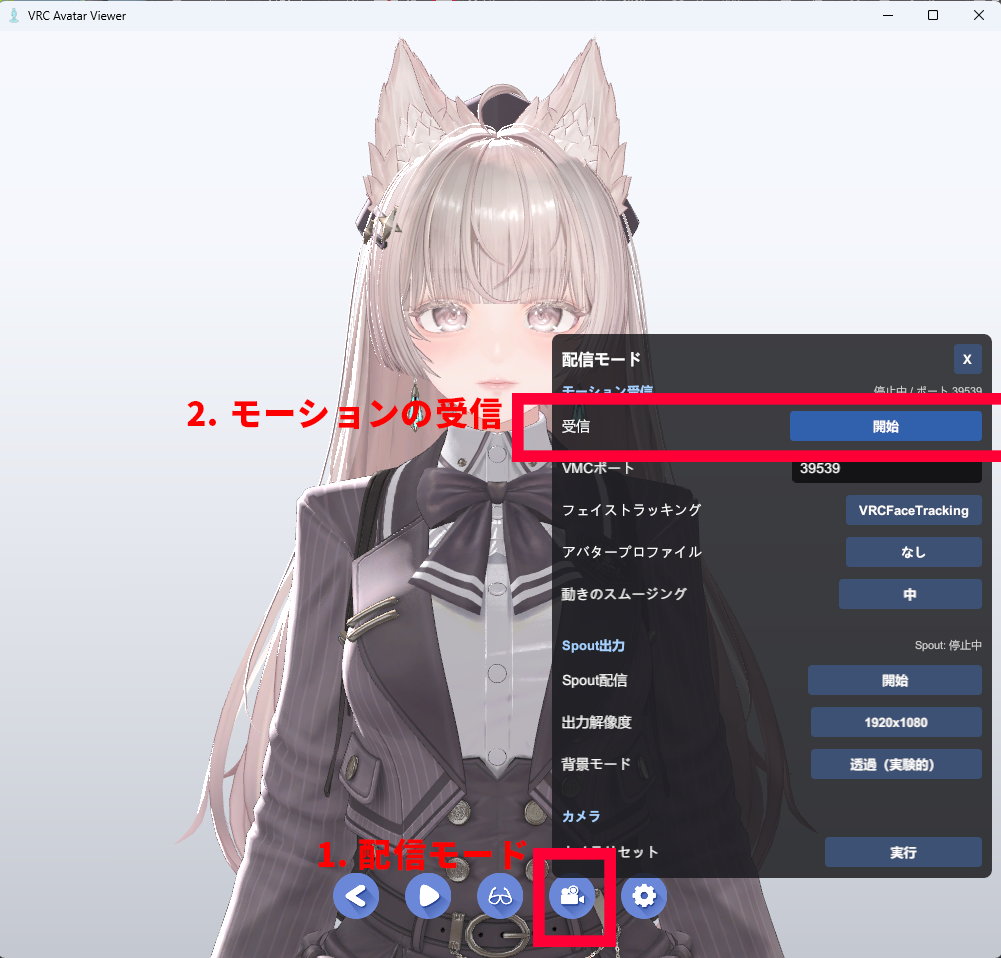
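
For readers curious about what travels over the wire, the following sketch hand-encodes a single VMC bone message as an OSC packet and sends it over UDP using only the Python standard library. The address `/VMC/Ext/Bone/Pos` and its argument layout (bone name, position x/y/z, rotation quaternion x/y/z/w) come from the public VMC protocol specification; the helper names are hypothetical, and whether VRC Avatar Viewer visibly reacts to a lone message like this is not confirmed by this guide.

```python
import socket
import struct


def osc_string(text: str) -> bytes:
    """Encode an OSC string: ASCII, null-terminated, padded to a multiple of 4 bytes."""
    data = text.encode("ascii") + b"\x00"
    return data + b"\x00" * (-len(data) % 4)


def vmc_bone_message(bone: str, position, rotation) -> bytes:
    """Build one /VMC/Ext/Bone/Pos message.

    position is (x, y, z); rotation is a quaternion (x, y, z, w).
    """
    floats = (*position, *rotation)
    return (
        osc_string("/VMC/Ext/Bone/Pos")
        + osc_string(",sfffffff")        # type tags: one string, seven floats
        + osc_string(bone)
        + struct.pack(">7f", *floats)    # OSC floats are big-endian 32-bit
    )


def send_test_pose(host: str = "127.0.0.1", port: int = 39539) -> int:
    """Send one Head bone message; returns the number of bytes handed to the OS."""
    packet = vmc_bone_message("Head", (0.0, 0.0, 0.0), (0.0, 0.0, 0.0, 1.0))
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        return sock.sendto(packet, (host, port))
```

Since UDP is connectionless, `send_test_pose()` reports success even when nothing is listening, so a successful send does not by itself prove that the viewer received the data.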

2-1. VRC Avatar Viewer Side: Enable VMC Reception

- Display your avatar in VRC Avatar Viewer

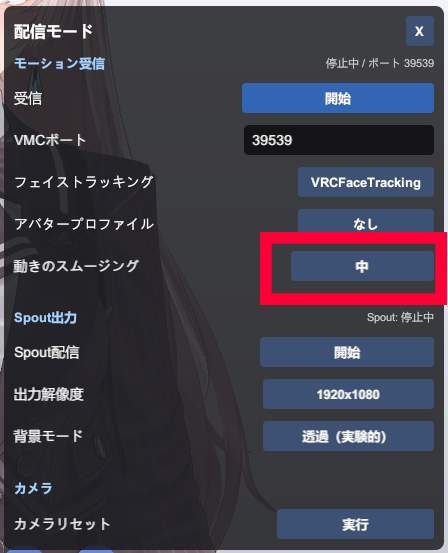

- Click the Streaming Mode button in the toolbar

- Turn "VMC Reception" ON (default port: 39539)

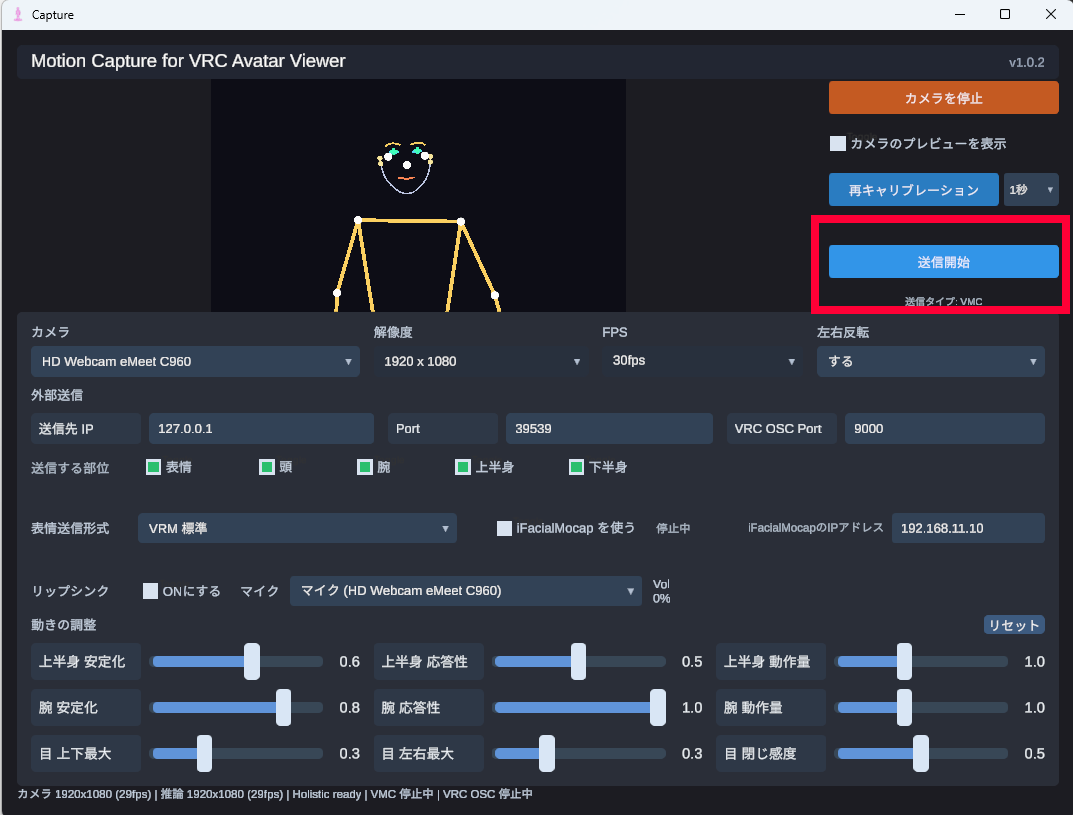

2-2. Capture App Side: Start Sending

- Set the "Destination IP" in the capture app to 127.0.0.1 and "Port" to 39539

- Click the "Start Sending" button

Your avatar will now start moving in sync with your movements.

- Below the "Start Sending" button, a caption showing "Transmission Type: VMC" or "Transmission Type: VMC + VRChat OSC" will appear. This indicates the currently active transmission protocol

- The caption changes depending on the facial expression transmission format selected (VRM Standard / Perfect Sync / VRCFT). Only when VRCFT is selected will face tracking data be sent to VRChat OSC in addition to VMC

- For details on facial expression tracking, refer to the Face Tracking or VRCFaceTracking Integration guide

- If movements feel stiff, try switching the "Motion Smoothing" preset (6 levels) to find the right balance between responsiveness and smoothness

2-3. When Things Don't Work

- Avatar is not responding: Check that VMC Reception is ON in VRC Avatar Viewer, and that the IP address and port on the capture app side are correct

- Movement freezes: Check that you are visible in the capture app preview and that tracking is working

- Poor movement quality: If your clothing and background are similar in color, tracking accuracy will noticeably decrease. In particular, avoid wearing clothing that is a similar color to the floor or walls. Colors that look different to the human eye can appear nearly identical in camera footage. Clothing with strong contrast against the background is recommended.
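
If it is unclear whether packets from the capture app reach the PC at all, a small UDP listener can help narrow the problem down. The sketch below waits for one packet on the VMC port and reports the sender and the OSC address at the start of the packet (an OSC bundle shows up as `#bundle`). The function names are hypothetical, and the sketch assumes that VMC Reception is OFF or VRC Avatar Viewer is closed while it runs, because normally only one program can hold the port.

```python
import socket


def osc_address(packet: bytes) -> str:
    """Return the text at the start of an OSC packet, up to the first null byte."""
    return packet.split(b"\x00", 1)[0].decode("ascii", errors="replace")


def listen_once(port: int = 39539, timeout: float = 5.0):
    """Wait for one UDP packet on the given port.

    Returns (sender_ip, osc_address), or None if nothing arrives in time.
    """
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.bind(("0.0.0.0", port))
        sock.settimeout(timeout)
        try:
            packet, (sender_ip, _sender_port) = sock.recvfrom(65535)
        except socket.timeout:
            return None
    return sender_ip, osc_address(packet)


if __name__ == "__main__":
    result = listen_once()
    print("No packet received" if result is None else f"Received {result[1]} from {result[0]}")
```

If this prints a sender and an address while the capture app is sending, the network path is working and the remaining checks are on the viewer side. If nothing arrives, recheck the destination IP, the port, and any firewall rules.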

2-4. Sending from a Separate PC

If you want to use separate PCs for capture and streaming, set the "Destination IP" on the capture app side to the IP address of the PC running VRC Avatar Viewer. Make sure to allow inbound UDP 39539 traffic through the firewall on the VRC Avatar Viewer PC. A wired connection on the same LAN is recommended.

2-5. Other Notes

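I'd like other streamers to know this as well: for stable tracking, the framing and your clothing matter more than the camera's performance.

- Frame the shot so that your elbows and arms are in view (roughly as in the attached image) ⇒ tracking stability improves

- Wear clothing that leaves your arms exposed (short sleeves, for example) ⇒…

pic.twitter.com/u5SXE0SPa9

Posted by Rob_VRC (@Rob_VRC), April 22, 2026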

Face Tracking

Face tracking is covered in separate guides.

- Face Tracking (Webcam / iFacialMocap): How to capture facial expressions using a webcam or iPhone (iFacialMocap) with the capture app

- VRCFaceTracking Integration: How to send facial expressions directly to the viewer app from VRCFaceTracking, the de facto standard for face tracking in VRChat (Quest Pro / Vive Facial Tracker, etc.)

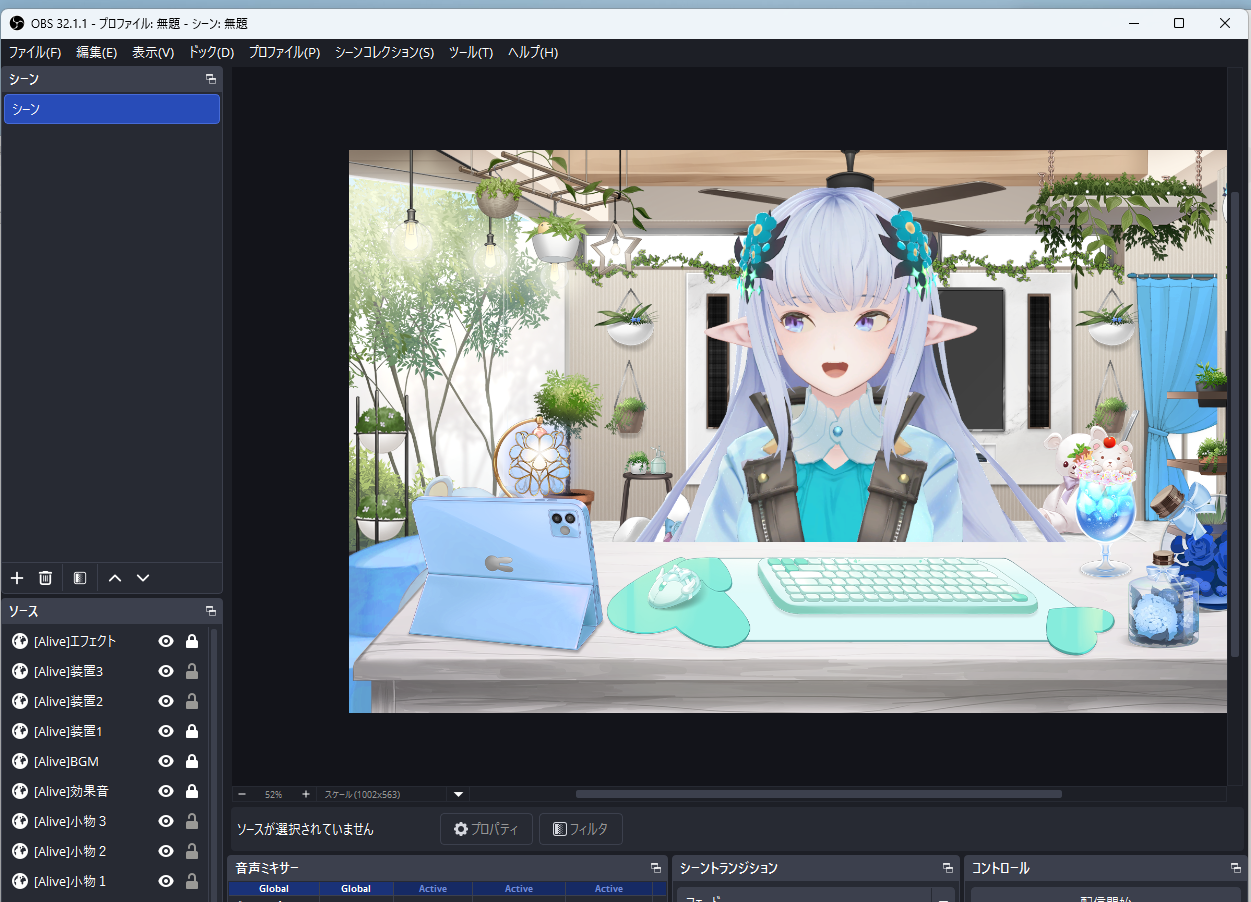

Capture Your Avatar in OBS Studio

There are two ways to use VRC Avatar Viewer as a streaming source in OBS Studio (hereafter OBS). Using Spout2 is recommended for superior image quality and performance, but you can also use the "Window Capture" method, which requires no plugin installation.

Method A: Output to OBS via Spout2 (Recommended)

Spout is a mechanism for directly sharing video between applications on Windows. It allows you to pass your avatar to OBS with high quality and low latency.

- Prepare OBS: Install the Spout2 Plugin for OBS Studio and restart OBS

- VRC Avatar Viewer side: Open Streaming Mode and turn Spout output ON

- OBS side: Add a source, select "Spout2 Capture", and choose the output being sent from VRC Avatar Viewer

- Spout can also pass the alpha channel (transparency information), so you can composite the avatar in OBS with a transparent background

Method B: Transparent Background Mode + Window Capture

This is suitable when you prefer not to install plugins, or when you also want to use the avatar as a desktop mascot.

- Turn ON Transparent Background Mode from the VRC Avatar Viewer toolbar (the background disappears, leaving only the avatar)

- In OBS, add a "Window Capture" source and select the VRC Avatar Viewer window

- Use cropping and size adjustments to position the avatar as desired

- You can still control the avatar's camera with the mouse while in Transparent Background Mode